For millennia, human ingenuity has been entwined with the development of tools to aid computation. From abacus beads to the barely comprehensible entanglement of quantum bits, computing has evolved not only in speed and complexity but also in the way engineers use it to solve problems and build the world around us.

This is the story of computing told through the lens of an engineer. It begins with the ancient abacus and winds through mechanical and electronic revolutions up to a quantum age that promises to redefine the boundaries of what’s possible.

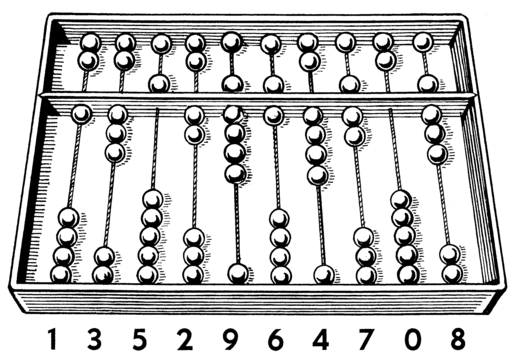

I. The Abacus (c.2300 BCE – 1800s)

One of the earliest computing devices, the abacus, dates back more than four thousand years. Versions of it were used in Mesopotamia, Greece, Rome, and China, and it turned arithmetic into a tactile, visual process. Though it did not “compute” in the digital sense, it enabled structured numerical reasoning.

For ancient engineers, including those who built monuments still known today, the abacus was an important tool for developing and applying mathematical skill.

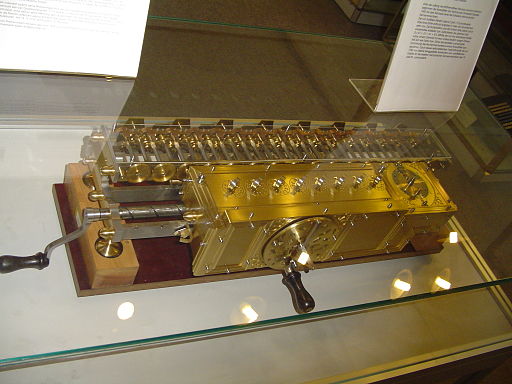

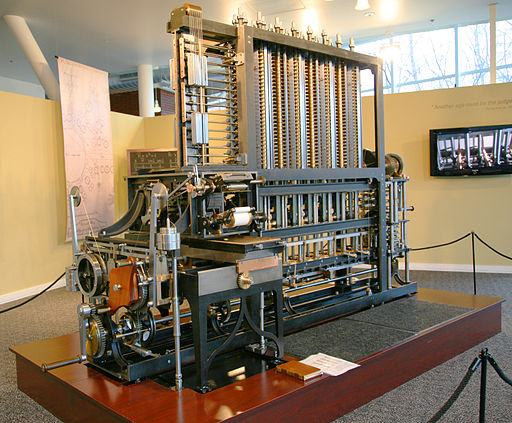

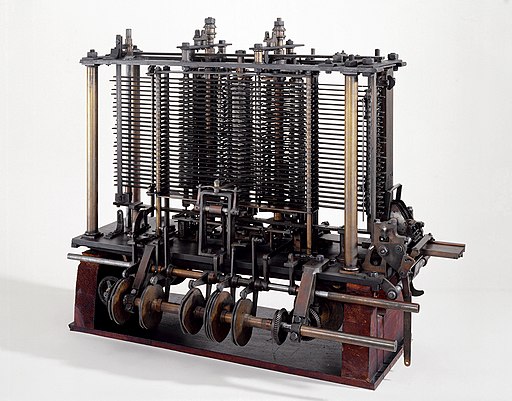

II. Mechanical and Analog Computing (1600s – 1940s)

The Renaissance Period ushered in a mechanical awakening. In the 17th century, Blaise Pascal’s Pascaline and Gottfried Wilhelm Leibniz’s Stepped Reckoner became some of the first true calculating machines, precursors to automation in engineering.

By the 19th century, Charles Babbage designed the Difference Engine and conceptualized the Analytical Engine, foreshadowing programmable computers. Though never fully built in his time, Babbage’s vision directly influenced modern computing architecture.

The early 20th century introduced analog machines like the differential analyzer, developed by Vannevar Bush at MIT. These machines could solve differential equations essential for modeling stress, fluid flow, and thermodynamics in engineering.

These analog machines allowed engineers to simulate complex, continuous systems. They could model the stress on a bridge or the trajectory of an artillery shell by building a physical analog, turning complex equations into observable mechanical motion.
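To make the idea concrete, here is a minimal digital sketch of the kind of problem a differential analyzer handled mechanically: integrating the equations of motion of a shell step by step. It is an illustration only, not a model of Bush's machine. The function name `simulate_trajectory`, the lumped drag constant `drag_coeff`, and the time step `dt` are all assumptions introduced for this example.

```python
import math


def simulate_trajectory(speed, angle_deg, drag_coeff=0.0, dt=0.001, g=9.81):
    """Integrate projectile motion with small time steps (semi-implicit Euler).

    drag_coeff is a hypothetical lumped constant k (units 1/m) in the drag
    acceleration a = -k * |v| * v. Returns (range_m, flight_time_s).
    """
    angle = math.radians(angle_deg)
    x, y = 0.0, 0.0
    vx, vy = speed * math.cos(angle), speed * math.sin(angle)
    t = 0.0
    while True:
        v = math.hypot(vx, vy)
        # Accelerations: quadratic drag opposes velocity, gravity pulls down.
        ax = -drag_coeff * v * vx
        ay = -g - drag_coeff * v * vy
        # Update velocity first, then position with the new velocity.
        vx += ax * dt
        vy += ay * dt
        new_x = x + vx * dt
        new_y = y + vy * dt
        if new_y < 0.0:
            # The shell crossed the ground during this step; interpolate
            # linearly to estimate the impact point and time.
            frac = y / (y - new_y)
            return x + frac * (new_x - x), t + frac * dt
        x, y = new_x, new_y
        t += dt


if __name__ == "__main__":
    vacuum_range, _ = simulate_trajectory(100.0, 45.0)
    drag_range, _ = simulate_trajectory(100.0, 45.0, drag_coeff=0.001)
    print(f"range without drag: {vacuum_range:.1f} m")
    print(f"range with drag:    {drag_range:.1f} m")
```

Where the analog machine accumulated these integrals continuously with rotating wheels and shafts, the digital version approximates them by summing many small steps.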

III. Digital Computing (1946 – 1970s)

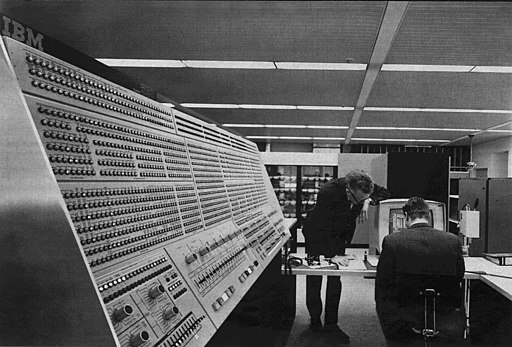

The unveiling of ENIAC (Electronic Numerical Integrator and Computer) in 1946 marked the beginning of general-purpose electronic digital computing. It could perform 5,000 additions per second, which was revolutionary at the time. Originally built for defense applications, ENIAC and its successors soon found use in civil and structural engineering.

The advent of transistors and integrated circuits in the 1950s and ’60s allowed for the rise of commercial computers. These machines ran early engineering programs capable of analyzing frame structures, pipe networks, and soil mechanics.

For example, engineers used IBM mainframes to help design the Apollo program’s launch infrastructure, calculating load distributions and thermal stresses on launchpads and towers.

IV. Personal Computing (1980s – 1990s)

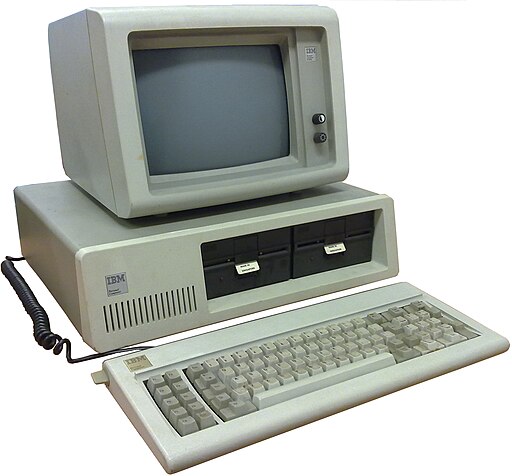

The development of the microprocessor in the early 1970s set the stage for the personal computer revolution. By the 1980s, engineers no longer needed access to mainframes. With software like AutoCAD, ETABS, and ANSYS, they could design, analyze, and document projects on a desktop. These CAD and 3D modeling tools empowered engineers to visualize failure points, iterate quickly, and check their designs against evolving design codes as they worked.

For example, the design of the Akashi Kaikyō Bridge in Japan, one of the longest suspension bridges in the world, relied heavily on early computer modeling for seismic, aerodynamic, and structural analysis.

V. Networked Computing (2000s – 2020s)

The rise of networked computing and cloud-based platforms allowed engineers across the globe to collaborate in real time. Simulations could run in the cloud, drawing on massive datasets to model entire cities, traffic networks, and environmental systems.

Meanwhile, machine learning and AI began to shape engineering workflows, from predicting material fatigue to optimizing energy use in smart buildings.

VI. Quantum Computing (2020s – Future)

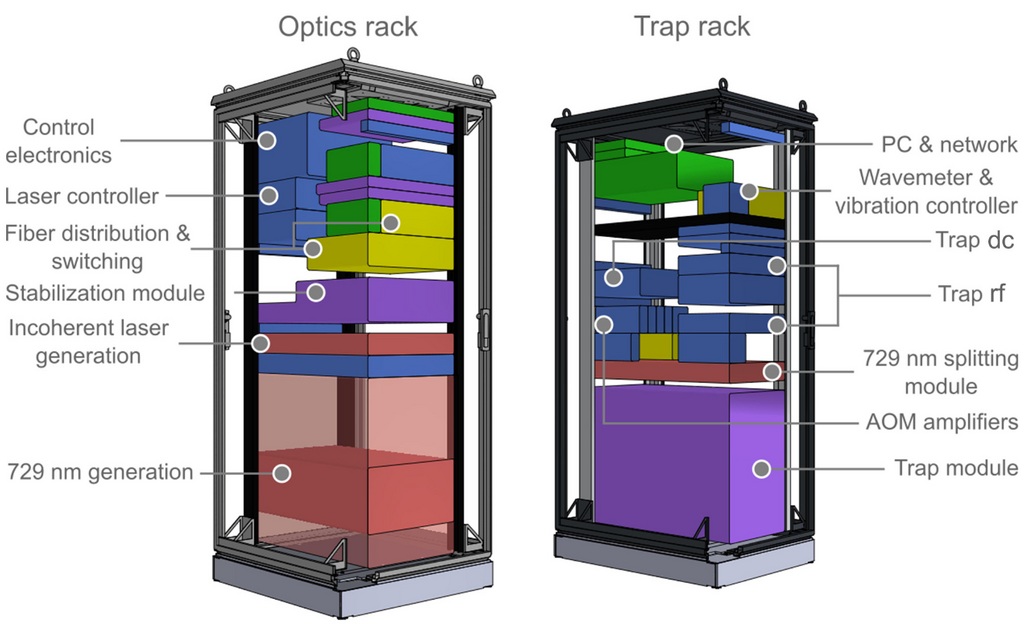

As classical computing approaches the limits of transistor density and energy efficiency, quantum computing introduces a radically new model based on superposition, entanglement, and quantum interference.

Unlike classical bits, which are always either 0 or 1, qubits can exist in a superposition of both states at once. This allows quantum algorithms to work across large solution spaces in ways classical machines cannot, a feature particularly suited to certain classes of engineering problems.
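The notion of a register holding many states at once can be illustrated with a small classical simulation. The sketch below is a toy, not a quantum program: it tracks the state vector of a few qubits in ordinary Python and applies a Hadamard gate to each one, producing an equal superposition of every bit pattern. The helper names `hadamard_all` and `probabilities` are made up for this example.

```python
import math


def hadamard_all(n_qubits):
    """Return the state vector after applying a Hadamard gate to each of
    n_qubits qubits that all start in the |0> state."""
    # An n-qubit register is described by 2**n amplitudes.
    state = [1.0] + [0.0] * (2 ** n_qubits - 1)
    h = 1.0 / math.sqrt(2.0)
    for q in range(n_qubits):
        stride = 1 << q  # bit mask selecting qubit q
        new_state = state[:]
        for i in range(len(state)):
            if (i & stride) == 0:
                # Pair up the basis states that differ only in qubit q.
                a, b = state[i], state[i | stride]
                new_state[i] = h * (a + b)
                new_state[i | stride] = h * (a - b)
        state = new_state
    return state


def probabilities(state):
    """Probability of measuring each basis state (squared amplitude)."""
    return [abs(amp) ** 2 for amp in state]


if __name__ == "__main__":
    probs = probabilities(hadamard_all(3))
    for index, p in enumerate(probs):
        print(f"|{index:03b}>  probability {p:.3f}")
```

Two caveats apply. The list of amplitudes doubles with every added qubit, which is why classical simulation becomes impractical quickly. And a measurement returns only one of the bit patterns, so useful quantum algorithms depend on interference to make the desired answers more likely.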

Potential Engineering Applications:

- Real-time optimization of large-scale civil systems (e.g., transportation networks, utility grids).

- Advanced materials simulation, predicting how novel construction materials behave at molecular levels.

- Fluid dynamics and turbulence modeling, for more accurate and faster simulations of wind, water, and structural interaction.

Conclusion

The story of computing is, at its core, the story of our engineering ambition. It is our desire to understand, model, and reshape the world around us. What began with the simple push of an abacus bead now involves manipulating the probabilistic behavior of quantum particles.

Across every generation, from analog to digital to quantum, computing has not merely accelerated engineering; it has transformed how we conceive of engineering itself. And as we enter the quantum era, one thing is certain: we are no longer just building machines to solve problems; we are building machines to redefine them.

